The Seductive Slippery Slope of Using Science to Predict “Bad” Behavior

Incredibly, there are still researchers who believe we can use advanced technologies to predict “good” and “bad” behavior

In 2018, I wrote extensively about using technology to predict behavior — especially criminal intent and a propensity toward “bad” behavior — in the book Films from the Future.

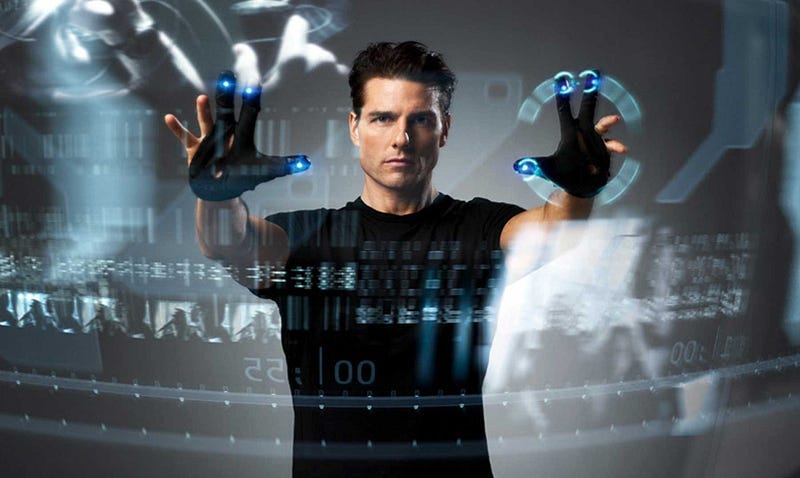

Given renewed interest in this area, I thought it worth posting a few relevant excerpts from the book here. These are from chapter four, which was inspired by the movie Minority Report. While this film uses a fantastical plot device (“precogs” able to predict future murders), it nevertheless provides a rich backdrop against which to explore the dangers of assuming that technology can be used to sift out “good” people from “bad.”

The “Science” of Predicting Bad Behavior

In March 2017, the British newspaper The Guardian ran an online story with the headline “Brain scans can spot criminals, scientists say.” Unlike in Minority Report, the scanning was carried out using a hefty functional magnetic resonance imaging (fMRI) machine, rather than genetically altered precogs. But the story seemed to suggest that scientists were getting closer to spotting criminal intent before a crime had been committed, using sophisticated real-time brain imaging.

In this case, the headline vastly overstepped the mark. The original research used fMRI to see if brain activity could be used to distinguish knowingly criminal behavior from merely reckless behavior. It did this by setting up a somewhat complex situation, where volunteers were asked to take a suitcase containing something valuable through a security checkpoint while undergoing a brain scan. But to make things more interesting (and scientifically useful), their actions and choices came with financial rewards and consequences.

The study suggested brain activity could be used to indicate what might be considered criminal intent, and this is what threw headline writers into a clickbait frenzy. But the research was far from conclusive. In fact, the authors explicitly stated that “it would be absurd to suggest, in light of our results, that the task of assessing the mental state of a defendant could or should, even in principle, be reduced to the classification of brain data.” They also pointed out that, even if these results could be used to predict the mental state of a person while committing a crime, that person would have to be inside an fMRI scanner at the time, which would be tricky.

Despite the impracticality of using this research to assess the mental state of people during the act of committing a crime, media stories around the study tapped into a deep-seated fascination with predicting criminal tendencies or intent. Yet this is not a new fascination, and neither is the use of science to justify its indulgence.

In the nineteenth century, a very different “science” of predicting criminal tendencies was all the rage: phrenology. Phrenology attempted to predict someone’s character and behavior from the shape of their skull. As understanding of how the brain works developed, the practice became increasingly discredited. Sadly, though, it laid a foundation for the assumption that traits which appear to be common to people of “poor character” are also predictive of their behavior, a classic case of correlation being mistaken for causation. And it foreshadowed research that continues to this day to connect what someone looks like with how they might act.

Despite their pseudoscientific roots, the ideas coming out of phrenology were picked up by the nineteenth-century criminologist Cesare Lombroso. Lombroso was convinced that physical traits such as jaw size, forehead slope, and ear size were associated with criminal tendencies. His theory was that these and other traits were throwbacks to earlier evolutionary ancestors, and that they indicated an innate tendency toward criminal behavior.

It’s not hard to see how attractive these ideas might have been to some, as they suggested criminals could be identified and dealt with before breaking the law. With hindsight, it’s easy to see how misguided and malevolent they were, but at the time, many people bought into them. It would be nice to think that this way of thinking about criminal tendencies was a short and salutary aberration in humanity’s history. Sadly, though, it paved the way to even more divisive forms of pseudoscience-based discrimination, including eugenics.

In the early twentieth century, discrimination that was purportedly based on scientific evidence shifted toward the idea that the quality or “worth” of a person is based on their genetic heritage. The “science” of eugenics — and sadly this is something that many scientists at the time supported — suggested that our genetic heritage determines everything about us, including our moral character and our social acceptability. It was a deeply flawed concept that, nevertheless, came with the same seductive idea that, if we know what makes people “bad,” we can remove them from society before they cause a problem. What is heartbreaking is that these ideas coming from academics and scientists gained political momentum, and ultimately became part of the justification for the murder of six million Jews, and many others besides, in the Holocaust.

These days, I’d like to think we’re more enlightened, and that we don’t fall prey so easily to using scientific flights of fancy to justify how we treat others. Unfortunately, this doesn’t seem to be the case.

In 2011, three researchers published a paper suggesting that you can tell a criminal from someone who isn’t (and, presumably by inference, someone who is likely to engage in criminal activities) by what they look like. In the study, thirty-six students in a psychology class (thirty-three women and three men) were shown mug shots of thirty-two Caucasian males. They were told that some were criminals, and they were asked to assess — from the photos alone — whether each person had committed a crime; whether they’d committed a violent crime; if it was a violent crime, whether it was rape or assault; and if it was non-violent, whether it was arson or a drug offense.

Within the limitations of the study, the participants were more likely to correctly identify criminals than incorrectly identify them from the photos. Not surprisingly, perhaps, this led to a slew of headlines along the lines of “Criminals Look Different From Non-criminals” (this one from a blog post on Psychology Today). Yet the results of the study are hard to interpret with any degree of certainty. It’s not clear what biases may have been introduced, for instance, by having the photos evaluated by a mainly female group of psychology students, or by only using photos of white males, or even whether something about how the photos were selected and presented, and how the questions were asked, influenced the results.

The results did seem to indicate that, overall, the students were successful in identifying photos of convicted criminals in this particular context. But the study was so small, and so narrowly defined, that it’s hard to draw any clear conclusions from it. However, there is a larger issue at stake with this and similar studies: the ethics of carrying out and publicizing such research in the first place. Here, the very appropriateness of asking whether we can predict criminal behavior brings us back to the earlier study on intent versus reckless behavior, and to the underlying premise in Minority Report.

The assumption that someone’s behavioral tendencies can be predicted from no more than what they look like, or how their brain functions, is a slippery slope. It assumes — dangerously so — that behavior is governed by genetic heritage and upbringing. But it also opens the door to a better-safe-than-sorry attitude to law and order that considers it better to restrain someone who might demonstrate socially undesirable behavior than to presume them innocent until proven guilty. And it’s an attitude that takes us down a path where we assume that other people do not have agency over their destiny. There is an implicit assumption here that how we behave can be separated out into “good” and “bad,” and that there is consensus on what constitutes these. But this is a deeply flawed assumption.

What the behavioral research above is actually looking at is someone’s tendency to break or bend agreed-on rules of socially acceptable conduct, as these are codified in law. These laws are not an absolute indicator of good or bad behavior. Rather, they are a result of how we operate collectively as a social species. In technical terms, they establish normative expectations of behavior, which simply means that most people comply with them, irrespective of whether they have moral or ethical value. For instance, in most cultures, it’s accepted that killing someone should be punished, unless it’s in the context of a legally sanctioned war or execution (although many societies would still consider this morally reprehensible). This is a deeply embedded norm, and most people would consider it a good guide to appropriate behavior. The same cannot be said of “norms” surrounding homosexual acts, though, which were illegal in England and Wales until 1967 and are still illegal in some countries around the world, or of others surrounding LGBTQ rights, or even women’s rights.

When social norms are embedded within criminal law, it may be possible to use physical features or other means to identify “criminals” or those likely to be involved in “criminal” behavior. But are we as a society really prepared to take preemptive action against people we arbitrarily label as “bad”? I sincerely hope not. And here we get to the crux of the ethical and moral challenges around predicting criminal intent. Even if we can predict tendencies from images alone — and I am highly skeptical that we can gain anything of value here that isn’t heavily influenced by researcher bias and social norms — should we? Is it really appropriate to be asking if we can predict, simply from how someone looks, whether they are likely to behave in a way that we think is appropriate or not? And is it ethical to generate data that could be used to discriminate against people based on their appearance?

Using facial features to predict tendencies puts us way down the slippery slope toward discriminating against people because they are different from us. Thankfully, this is an idea that many would dismiss as inappropriate these days. But, worryingly, our interest in relating brain activity to behavioral traits — the high-tech version of “looks like a criminal” — puts us on the same slippery slope.

Criminal Brain Scans

Unlike photos, functional magnetic resonance imaging allows researchers to monitor brain activity as it happens, albeit indirectly. It works by tracking changes in blood flow and oxygenation in different parts of the brain, and using these to pinpoint which regions of someone’s brain are active at any one point in time.

One of the beauties of fMRI is that it can map out brain activity as people are thinking about and processing the world around them. For instance, it can show which parts of a subject’s brain are triggered if they’re shown a photo of a donut, if they are happy, or sad, or angry, or what their brain activity looks like if they’re given the opportunity to take a risk.

fMRI has opened up a fascinating window into how we think about and respond to our surroundings, and in some cases, what we think. And it’s led to some startling revelations. We now know, for instance, that we often unconsciously decide what we’re going to do several seconds before we’re actually aware of making a decision. Recent research has even indicated that high-resolution fMRI scans on primates can be used to decode what the animals are seeing. The researchers were, quite literally, reading these primates’ minds.

This is quite incredible science. And not surprisingly, it’s leading to a revolution in understanding how our brains operate. This includes developing a better understanding of how certain brain behaviors can lead to debilitating medical conditions. It’s also leading to a deeper understanding of how the mechanics of our brain determine who we are, and how we behave.

That said, there’s still considerable skepticism around how effective a tool fMRI is and how robust some of its findings are. It’s also fair to say that some of these findings challenge deeply held beliefs about many of the things we hold dear, including the nature of free will, moral choice, kindness, compassion, and empathy. These are all aspects of ourselves that help define who we are as a person. Yet, with the advent of fMRI and other neuroscience-based tools, it sometimes feels like we’re teetering on the precipice of realizing that who we think we are — our sense of self, or our “soul” if you like — is merely an illusion of our biology.

This in itself raises questions over the degree to which neuroscience is racing ahead of our ability to cope with what it reveals. Yet the reality is that this science is progressing at breakneck speed, and that fMRI is allowing us to dive ever deeper behind our outward selves — our facial features and our easily observed behaviors — and into the very fabric of the organ that plays such a central role in defining us. And, just like phrenology and eugenics before it, it’s opening up the temptation to interpret how our brains operate as a way to predict what sort of person we are, and what we might do.

In 2010, researchers provided a group of subjects with advice on the importance of using sunscreen every day. At the same time, the subjects’ brain activity was monitored using fMRI. It’s just one of many studies that are increasingly trying to use real-time brain activity monitoring to predict behavior.

In the sunscreen study, the subjects were asked how likely they were to take the advice they were given. A week later, researchers checked in with them to see how they’d done. Using the fMRI scans, the researchers were able to predict which subjects were going to use sunscreen and which were not. But more importantly, the researchers discovered that, using the scans, they were better at predicting how the subjects would behave than the subjects themselves were. In other words, the researchers knew their subjects’ minds better than the subjects did.

Research like this suggests that our behavior is determined by measurable biological traits as much as by our free will, and it’s pushing the boundaries of how we understand ourselves and how we behave, both as individuals and as a society. And, while science will never enable us to predict the future in the same way as Minority Report’s precogs, it’s not too much of a stretch to imagine that fMRI and similar techniques may one day be used to predict the likelihood of someone engaging in antisocial and morally questionable behavior.

But even if predicting behavior based on what we can measure is possible, is this a responsible direction to be heading in?

The problem is, just as with research that tries to tie facial features, head shape, or genetic heritage to a propensity to engage in criminal behavior, fMRI research is equally susceptible to human biases. It’s not so much that we can collect data on brain activity that’s problematic; it’s how we decide what data to collect, and how we end up interpreting and using it, that’s the issue.

A large part of the challenge here is understanding what the motivation is behind the research questions being asked, and what subtle underlying assumptions are nudging a complex series of scientific decisions toward results that seem to support these assumptions.

Here, there’s a danger of being caught up in the misapprehension that the scientific method is pure and unbiased, and that it’s solely about the pursuit of truth. To be sure, science is indeed one of the best tools we have to understand the reality of how the world around us and within us works. And it is self-correcting — ultimately, errors in scientific thinking cannot stand up to the scrutiny the scientific method exposes them to. Yet this self-correcting nature of science takes time, sometimes decades or centuries. And until it self-corrects, science is deeply susceptible to human foibles, as phrenology, eugenics, and other misguided ideas have all too disturbingly shown.

This susceptibility to human bias is greatly amplified in areas where the scientific evidence we have at our disposal is far from certain, and where complex statistics are needed to tease out what we think is useful information from the surrounding noise. And this is very much the case with behavioral studies and fMRI research. Here, limited studies on small numbers of people that are carried out under constrained conditions can lead to data that seem to support new ideas. But we’re increasingly finding that many such studies aren’t reproducible, or that they are not as generalizable as we at first thought. As a result, even if a study does one day suggest that a brain scan can tell if you’re likely to steal the office paper clips, or murder your boss, the validity of the prediction is likely to be extremely suspect, and certainly not one that has any place in informing legal action—or any form of discriminatory action—before any crime has been committed.
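The statistical point here, that small studies can produce results which look meaningful through chance alone, can be made concrete with a short calculation. The sketch below is a hypothetical illustration, not a reanalysis of any study mentioned in this chapter. It assumes a judge who is simply guessing (a 50 percent chance of being right on each of 30 items) and asks how often such guessing would still score 19 or more correct, and how often at least one of 20 independent small studies, none with any real effect, would clear that same bar. The function names and numbers are illustrative assumptions.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float = 0.5) -> float:
    """Binomial tail: chance of k or more 'correct' calls out of n,
    when each call is independently right with probability p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def prob_any_study_hits(k: int, n: int, studies: int, p: float = 0.5) -> float:
    """Chance that at least one of several independent, effect-free
    studies reaches k or more correct out of n purely by chance."""
    single = prob_at_least(k, n, p)
    return 1 - (1 - single) ** studies


if __name__ == "__main__":
    # Hypothetical numbers: a pure guesser on 30 items scoring 19 or more (about 63%).
    print(f"one study:  {prob_at_least(19, 30):.3f}")
    # The same threshold, but asking whether any of 20 small studies gets there.
    print(f"any of 20:  {prob_any_study_hits(19, 30, 20):.3f}")
```

With these assumed numbers, a pure guesser reaches 19 of 30 about 10 percent of the time, and the chance that at least one of 20 effect-free studies does so is close to 90 percent. This is one reason why a single, small, unreplicated result is weak grounds for claims about predicting behavior.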

Machine Learning-Based Precognition

Just as in Minority Report, the science and speculation around behavior prediction challenges our ideas of free will and justice. Is it just to restrict and restrain people based on what someone’s science predicts they might do? Probably not, because embedded in the “science” are value judgments about what sort of behavior is unwanted, and what sort of person might engage in such behavior. More than this, though, the notion of pre-justice challenges the very idea that we have some degree of control over our destiny. And this in turn raises deep questions about determinism versus free will. Can we, in principle, know enough to fully determine someone’s actions and behavior ahead of time, or is there sufficient uncertainty and unpredictability in the world to make free will and choice valid ideas?

In Chapter Two, with Jurassic Park, we were introduced to the ideas of chaos and complexity, and these turn out to be just as relevant here. Even before we have the science pinned down, it’s likely that the complexities of the human mind, together with the incredibly broad and often unusual panoply of things we all experience, will make predicting what we do all but impossible. As with Mandelbrot’s fractal, we will undoubtedly be able to draw boundaries around more or less likely behaviors. But within these boundaries, even with the most exhaustive measurements and the most powerful computers, I doubt we will ever be able to predict with absolute certainty what someone will do in the future. There will always be an element of chance and choice that determines our actions.

Despite this, the idea that we can predict whether someone is going to behave in a way that we consider “good” or “bad” remains a seductive one, and one that is increasingly being fed by technologies that go beyond fMRI.

In 2016, two scientists released the results of a study in which they used machine learning to train an algorithm to identify criminals based on headshots alone. The study was highly contentious and resulted in a significant public and academic backlash, leading the paper’s authors to state in an addendum to the paper, “Our work is only intended for pure academic discussions; how it has become a media consumption is a total surprise to us.”

Their work hit a nerve for many people because it seemed to reinforce the idea that criminal behavior is something that can be predicted from measurable physiological traits. But more than this, it suggested that a computer could be trained to read these traits and classify people as criminal or non-criminal, even before they’ve committed a crime.

The authors vehemently resisted suggestions that their work was biased or inappropriate, and took pains to point out that others were misinterpreting it. In their addendum, they write, “Nowhere in our paper advocated the use of our method as a tool of law enforcement, nor did our discussions advance from correlation to causality.”

Nevertheless, in the original paper, they conclude: “After controlled for race, gender and age, the general law-biding [sic] public have facial appearances that vary in a significantly lesser degree than criminals.” It’s hard to interpret this as anything other than a conclusion that machines and artificial intelligence could be developed that distinguish between people who have criminal tendencies and those who do not.

Part of why this is deeply disturbing is that it taps into the issue of “algorithmic bias” — our ability to create artificial-intelligence-based apps and machines that reflect the unconscious (and sometimes conscious) biases of those who develop them. Because of this, there’s a very real possibility that an artificial judge and jury that relies only on what you look like will reflect the prejudices of its human instructors.

This research is also disturbing because it takes us out of the realm of people interpreting data that may or may not be linked to behavioral tendencies, and into the world of big data and autonomous machines. Here, we begin to enter a space where we have not only trained computers to do our thinking for us, but we no longer know how they’re thinking. In a worrying twist of irony, we are using our increasing understanding of how the human brain works to develop and train artificial brains whose inner workings we understand less and less.

In 2003, a group of entrepreneurs set up the company Palantir, named after J. R. R. Tolkien’s seeing-stones in Lord of the Rings. The company excels at using big data to detect, monitor, and predict behavior, based on myriads of connections between what is known about people and organizations, and what can be inferred from the information that’s available. The company largely flew under the radar for many years, working with other companies and intelligence agencies to extract as much information as possible out of massive data sets. But in recent years, Palantir’s use in “predictive policing” has been attracting increasing attention. And in May 2018, the grassroots organization Stop LAPD Spying Coalition released a report raising concerns over the use of Palantir and other technologies by the Los Angeles Police Department for predicting where crimes are likely to occur, and who might commit them.

Palantir is just one of an increasing number of data collection and analytics technologies being used by law enforcement to manage and reduce crime. In the US, much of this comes under the banner of the “Smart Policing Initiative,” which is sponsored by the US Bureau of Justice Assistance. Smart Policing aims to develop and deploy “evidence-based, data-driven law enforcement tactics and strategies that are effective, efficient, and economical.” It’s an initiative that makes a lot of sense, as evidence-based and data-driven crime prevention is surely better than the alternatives. Yet there’s growing concern that, without sufficient due diligence, seemingly beneficial data and AI-based approaches to policing could easily slip into profiling and “managing people” before they commit a criminal act. Here, we’re replacing Minority Report’s precogs with massive data sets and AI algorithms, but the intent is remarkably similar: Use every ounce of technology we have to predict who might commit a crime, and where and when, and intervene to prevent the “bad” people from causing harm.

Naturally, despite the benefits of data-driven crime prevention (and they are many), irresponsible use of big data in policing opens the door to unethical actions and manipulation, just as is seen in Minority Report. Yet here, real life is perhaps taking us down an even more worrying path.

One of the more prominent concerns raised around predictive policing is the danger of human bias swaying data collection and analysis. If the designers of predictive policing systems believe they know who the “bad people” are, or even if they have unconscious biases that influence their perceptions, there’s a very real danger that crime prevention technologies end up targeting groups and neighborhoods that are assumed to have a higher tendency toward criminal behavior. This was at the center of the Stop LAPD Spying Coalition report, where there were fears that “black, brown, and poor” communities were being disproportionately targeted, not because they had a greater proportion of likely criminals, but because the predictive systems had been trained to believe this. And here, there are real dangers that predictive policing systems will end up targeting people who are assumed to have bad tendencies, whether they do or not.
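How a system can be “trained to believe” a pattern, rather than discover it, can be illustrated with a deliberately simplified toy model of a feedback loop. The sketch below is hypothetical and does not describe how Palantir, the LAPD, or any real predictive-policing product works. It assumes two areas with identical true crime rates, where one starts with more recorded crime (for instance, because it was more heavily policed in the past), patrols are allocated according to recorded crime, and incidents are only recorded where patrols are present to observe them. The function names and numbers are illustrative assumptions.

```python
def simulate_patrols(initial_records, true_rates, days, greedy=True):
    """Toy model of a predictive-policing feedback loop (hypothetical, not any real system).

    initial_records: recorded incidents per area at the start (possibly skewed by past policing)
    true_rates:      actual incidents per day in each area
    greedy:          if True, send all patrols to the area with the most recorded crime;
                     otherwise split patrols in proportion to recorded crime
    """
    records = [float(r) for r in initial_records]
    for _ in range(days):
        total = sum(records)
        if greedy:
            top = max(range(len(records)), key=lambda i: records[i])
            shares = [1.0 if i == top else 0.0 for i in range(len(records))]
        else:
            shares = [r / total for r in records]
        # Incidents only enter the data where officers are present to observe them,
        # so each area's new records scale with its share of patrols, not its true rate alone.
        for i, rate in enumerate(true_rates):
            records[i] += rate * shares[i]
    return records


def share_of_records(records, area):
    """Fraction of all recorded incidents attributed to one area."""
    return records[area] / sum(records)


if __name__ == "__main__":
    # Two areas with the SAME true crime rate, but area 0 starts with more recorded crime.
    start, rates = [60, 40], [10, 10]
    greedy = simulate_patrols(start, rates, days=100, greedy=True)
    proportional = simulate_patrols(start, rates, days=100, greedy=False)
    print(f"greedy:       area 0 holds {share_of_records(greedy, 0):.0%} of records")
    print(f"proportional: area 0 holds {share_of_records(proportional, 0):.0%} of records")
```

In this toy model, sending every patrol to the “worst” area leaves area 0 holding about 96 percent of all recorded crime after 100 days, while proportional allocation simply locks in the original 60/40 split. In neither case does the data ever reveal that the two areas are, by construction, identical.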

The hope is, of course, that we learn to wield this tremendously powerful technology responsibly and humanely because, without a doubt, if it’s used wisely, big data could make our lives safer and more secure. But this hope has to be tempered by our unfailing ability to delude ourselves in the face of evidence to the contrary, and to justify the unethical and the immoral in the service of an assumed greater good.

From “Films from the Future: The Technology and Morality of Sci-Fi Movies.” Published by Mango Publishing, November 2018